
Spark consumers read from the Kafka topics and write the data to S3 at example prefixes alpha/event=A and alpha/event=B The Go service sends the messages to Kafka topics matching the event types. Phones and the VSCO website send behavioral event messages to a Go service. We call these unprocessed Parquet files “alpha” data.ĭiagram: Behavioral event origins and upstream pipeline A consumer reads batches of event messages from the topic and writes them as Apache Parquet files to AWS S3. The event message is sent to an API that publishes it to a Kafka topic. For example, editing a photo will result in an event message describing which preset was used. When people interact with VSCO on our web or mobile platforms, their actions trigger the platforms to record event messages.

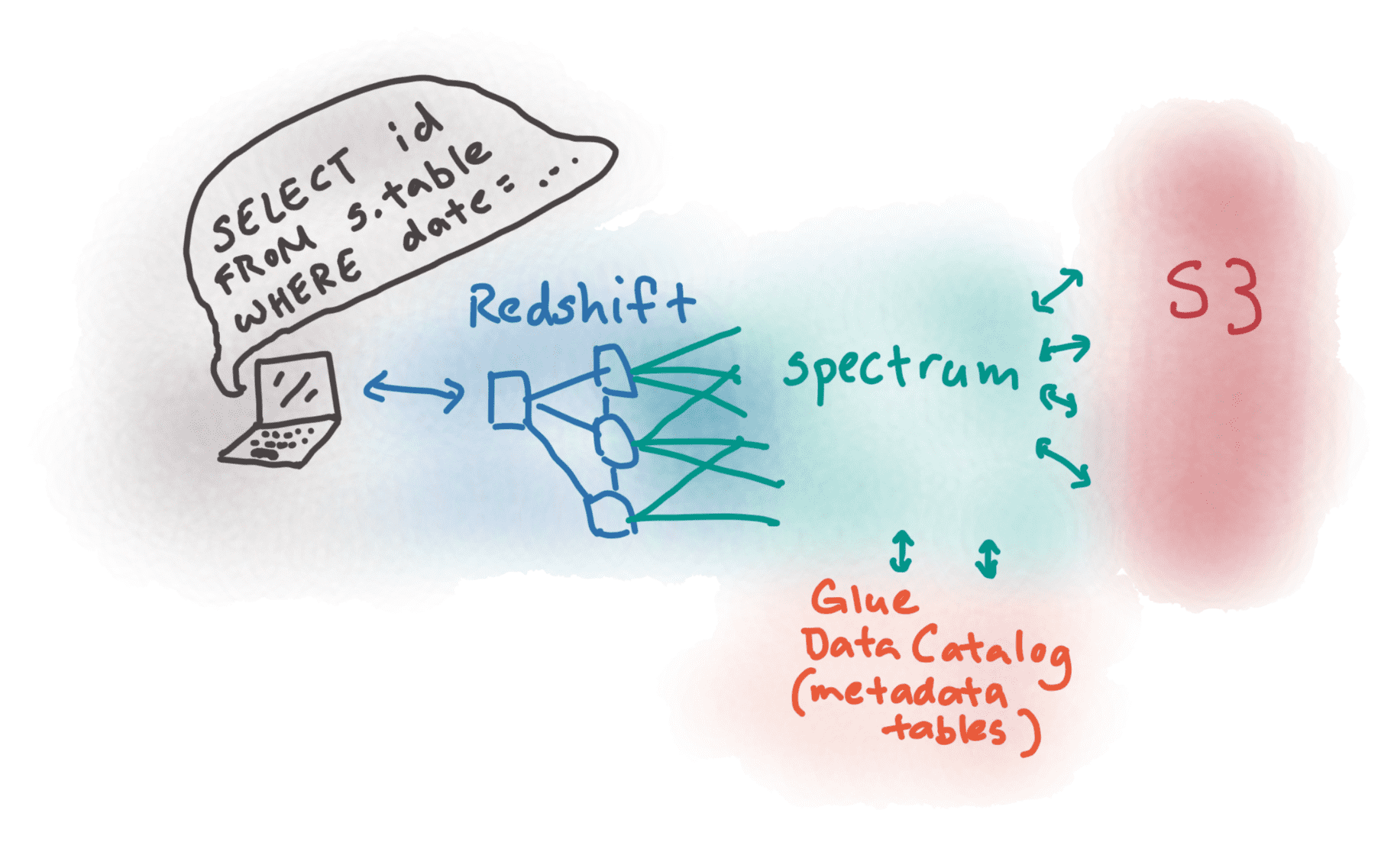

Let’s first overview the data’s upstream origins before describing the transform and load steps of that behavioral events data pipeline. Increased user engagement across VSCO’s mobile and web platforms was great news, but we wanted to limit storage costs for the rising influx of event data. In late 2018, VSCO’s behavioral event data was growing quickly. The first use case focuses on how we updated the transformation and loading of behavioral events for analytics. This piece describes steps taken to adopt Redshift Spectrum for our primary use case - behavioral events data, lists subsequent use cases, and closes with tips we’ve learned along the way. VSCO uses Amazon Redshift Spectrum with AWS Glue Catalog to query data in S3. Image of colorful lights over a subway sign by Quijano Flores
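
To make the alpha layout described above concrete, here is a minimal Python sketch of how a batch of event messages could be grouped under per-event prefixes such as alpha/event=A. It is an illustration only, not VSCO's consumer code: the `alpha_prefix` and `group_by_event` helpers and the message fields (`event`, `user_id`) are hypothetical, and the real pipeline uses Spark consumers that write Parquet files rather than in-memory lists.

```python
from collections import defaultdict

# Assumption: "alpha" is the root prefix for unprocessed data, as in the
# example prefixes alpha/event=A and alpha/event=B.
ALPHA_ROOT = "alpha"


def alpha_prefix(event_type: str) -> str:
    """Return the key=value style prefix for one event type."""
    return f"{ALPHA_ROOT}/event={event_type}"


def group_by_event(messages):
    """Group a batch of event messages by destination prefix.

    Each message is assumed to be a dict with an "event" key
    (a hypothetical schema used only for this sketch).
    """
    batches = defaultdict(list)
    for message in messages:
        batches[alpha_prefix(message["event"])].append(message)
    return dict(batches)


batch = [
    {"event": "A", "user_id": 1},
    {"event": "B", "user_id": 2},
    {"event": "A", "user_id": 3},
]
grouped = group_by_event(batch)
for prefix, rows in sorted(grouped.items()):
    print(prefix, len(rows))
```

The key=value form of the prefix follows the Hive-style partitioning convention, which lets a catalog expose the event type as a partition column so that queries filtering on it can skip unrelated prefixes.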
